On April 15, Jensen Huang told Dwarkesh Patel that Trainium and TPU external growth was “one hundred percent Anthropic,” and that Anthropic “is a unique instance, not a trend.”[1] The market read that as reassurance about Nvidia’s position. Yesterday’s piece argued it was the opposite: a concession, on the record, that the entire alt-silicon market is one customer, and that the customer exists because its equity-for-compute need lined up with a hyperscaler that had both the silicon and the checkbook.

Six days later, and nine days before earnings, Amazon priced that concession.

Today’s announcement pushes the investment structure to a potential cumulative position of $33 billion: Amazon invests $5 billion in Anthropic now and up to $20 billion more “tied to certain commercial milestones,” on top of the $8 billion already committed.[2] What the $25 billion of incremental equity buys, under the contract, is one word: primary.

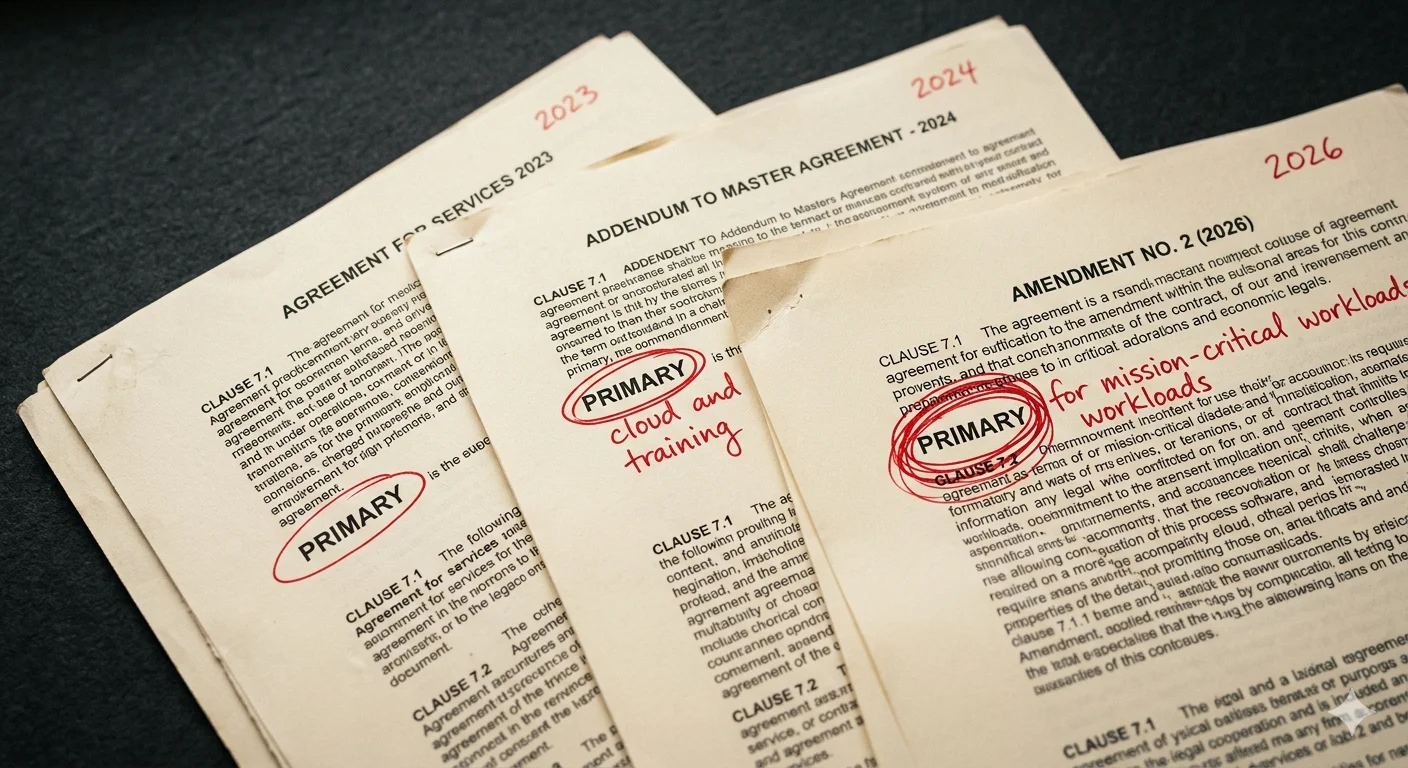

Three rounds, three times the same word

September 2023: Amazon invests $4 billion. Anthropic names AWS “its primary cloud provider.”[3]

November 2024: Amazon invests another $4 billion. Anthropic names AWS “our primary cloud and training partner.”[4]

April 2026: Amazon invests $5 billion now, with up to $20 billion more contingent on milestones. Anthropic’s language: “We continue to choose AWS as our primary training and cloud provider for mission-critical workloads.”[5]

Three rounds. Three times the same word. At $4 billion cumulative, “primary.” At $8 billion cumulative, “primary.” At up to $33 billion cumulative, still “primary,” now narrowed by a qualifier that did not exist in either previous agreement. “For mission-critical workloads” is not a deepening of exclusivity. It is a ring-fence marking which workloads AWS retains a claim to and, by extension, which it does not.
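The round-by-round comparison can be tallied mechanically. This is a minimal sketch using only the figures quoted in this piece. The 2026 entry is a ceiling ($5 billion now plus up to $20 billion gated on undisclosed milestones), not disbursed cash, and the names `ROUNDS` and `cumulative_equity` are hypothetical helpers for illustration.

```python
from itertools import accumulate

# Amazon equity per round in USD billions, paired with the designation
# Anthropic used that round. The 2026 amount is a ceiling: $5B now plus
# up to $20B tied to undisclosed commercial milestones.
ROUNDS = [
    ("September 2023", 4, "primary cloud provider"),
    ("November 2024", 4, "primary cloud and training partner"),
    ("April 2026", 5 + 20, "primary training and cloud provider for mission-critical workloads"),
]


def cumulative_equity(rounds):
    """Return (date, cumulative equity in $B, designation) for each round."""
    totals = accumulate(amount for _, amount, _ in rounds)
    return [(date, total, label) for (date, _, label), total in zip(rounds, totals)]


for date, total, label in cumulative_equity(ROUNDS):
    print(f'{date}: ${total}B cumulative -> "{label}"')
```

The cumulative column grows from 4 to 8 to 33 while the designation column only ever gains qualifiers.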

The reason the ring-fence appears in this round and not the previous two is disclosed in the same document: Claude is now “the only frontier AI model available to customers on all three of the world’s largest cloud platforms: AWS (Bedrock), Google Cloud (Vertex AI), and Microsoft Azure (Foundry).”[5] “Primary” in 2026 refers to one of three formally first-class hyperscaler relationships; in 2023, it referred to the only such relationship.

The triangulation mechanics

The $25 billion reads as defensive spending when compared with the two deals that preceded it.

On November 18, 2025, Microsoft invested up to $5 billion in Anthropic, and Nvidia committed up to $10 billion, against a $30 billion Anthropic commitment to Azure compute and up to 1 GW of Grace Blackwell and Vera Rubin capacity.[6] On April 6, 2026, Broadcom disclosed in an 8-K that Anthropic had committed to approximately 3.5 gigawatts (GW) of Google TPU capacity starting in 2027, on top of the 1 GW already coming online in 2026 through the October 2025 Google Cloud agreement.[7] Mizuho estimates Broadcom’s Anthropic-attributed revenue at $21 billion in 2026 and $42 billion in 2027.[8]

Amazon’s response, two weeks later, commits up to $33 billion in cumulative equity against $100 billion in Anthropic AWS spend over 10 years and 5 GW of Trainium capacity, with roughly 1 GW expected by the end of 2026.[2] Across Amazon, Microsoft, and Nvidia, Anthropic now holds up to $40 billion in new potential equity commitments ($25 billion incremental from Amazon, $5 billion from Microsoft, $10 billion from Nvidia) against compute spend exceeding $130 billion ($100 billion on AWS, $30 billion on Azure).
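The $40 billion and $130 billion totals can be reconstructed from the individual deals. This sketch rests on stated assumptions: Amazon is counted at its incremental $25 billion ceiling from this round, Nvidia is included even though it is an investor rather than a hyperscaler, and Google’s TPU commitment is excluded from the dollar total because it is disclosed in gigawatts. The dictionary names are hypothetical.

```python
# Figures cited in the text, in USD billions.
# Assumption: Amazon counted at its incremental 2026 ceiling ($5B + up to $20B);
# Nvidia included as an equity counterparty; Google TPU capacity excluded
# from the dollar compute total because it is disclosed only in GW.
EQUITY = {"Amazon (2026 round)": 25, "Microsoft": 5, "Nvidia": 10}
COMPUTE = {"AWS (10 years)": 100, "Azure": 30}

total_equity = sum(EQUITY.values())
total_compute = sum(COMPUTE.values())

print(f"Potential equity: up to ${total_equity}B")
print(f"Dollar-denominated compute commitments: ${total_compute}B+")
print(f"Compute dollars per equity dollar: {total_compute / total_equity:.2f}")
```

Roughly $3.25 of committed compute spend sits behind each dollar of potential equity, before any Google capacity is priced in.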

AWS remains primary by the measure that matters operationally. Its 5 GW is five times the initial Azure commitment and roughly 1.4 times the 2027 Google ramp. That is what Jassy’s quote points to, and it is the real commercial substance: capacity, Claude Platform native integration inside AWS accounts, and the Annapurna engineering loop on Trainium chip design.[2] None of that is nothing. It is also not what Amazon has historically paid for. The equity paid per designation has moved from $4 billion per “primary cloud provider” to $4 billion per “primary cloud and training partner,” and now up to $25 billion per “primary training and cloud provider for mission-critical workloads.” Capacity scaled. The word narrowed.
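The capacity comparison depends on which Google figure is used as the denominator. A quick check using only the gigawatt figures cited in this piece (the constant names and the `ratio` helper are illustrative; the deals run on different timelines, so these are headline ratios, not like-for-like delivery schedules):

```python
# Capacity figures cited in the text, in gigawatts (GW).
AWS_TRAINIUM_GW = 5.0      # up to 5 GW of Trainium capacity
AZURE_INITIAL_GW = 1.0     # up to 1 GW of Grace Blackwell / Vera Rubin
GOOGLE_2027_RAMP_GW = 3.5  # ~3.5 GW of TPU capacity via Broadcom from 2027
GOOGLE_2026_GW = 1.0       # ~1 GW coming online in 2026 (October 2025 deal)


def ratio(a, b):
    """Headline multiple of capacity a over capacity b."""
    return a / b


print(f"AWS vs Azure:              {ratio(AWS_TRAINIUM_GW, AZURE_INITIAL_GW):.2f}x")
print(f"AWS vs Google 2027 ramp:   {ratio(AWS_TRAINIUM_GW, GOOGLE_2027_RAMP_GW):.2f}x")
print(f"AWS vs Google 2026 + 2027: {ratio(AWS_TRAINIUM_GW, GOOGLE_2026_GW + GOOGLE_2027_RAMP_GW):.2f}x")
```

Against the 2027 ramp alone, AWS’s lead is about 1.43x; counting Google’s 2026 gigawatt as well narrows it to about 1.11x.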

The round-trip is affordable for each hyperscaler because of the revenue side of Anthropic’s ledger. Anthropic disclosed today that run-rate revenue has “surpassed $30 billion, up from approximately $9 billion at the end of 2025,” a 3.3x expansion in roughly four months.[5] At that growth rate, losing primary status to Azure or Google Cloud would not mean losing a billion-dollar customer. It would mean ceding a revenue stream worth tens of billions of dollars per year, compounding on the capex already committed.[9]
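What “compounding” means at this pace can be made concrete by converting the 3.3x expansion into an implied monthly rate. A minimal sketch, assuming the interval is four months as the text approximates it (the true span from year-end to the April 20 disclosure is slightly shorter, which would imply a slightly higher rate) and treating “surpassed $30 billion” as exactly $30 billion:

```python
# Implied compound monthly growth from the run-rate disclosure.
# Assumptions: ~$9B at end of 2025, $30B at disclosure (a lower bound,
# since the source says "surpassed"), and a four-month interval.
START_RUN_RATE = 9.0   # USD billions
END_RUN_RATE = 30.0    # USD billions
MONTHS = 4.0

multiple = END_RUN_RATE / START_RUN_RATE
monthly_rate = multiple ** (1 / MONTHS) - 1

print(f"Expansion multiple: {multiple:.2f}x")
print(f"Implied compound monthly growth: {monthly_rate:.1%}")
```

The implied rate is roughly 35 percent per month, which is why the demand-contingent structure of every deal in the triangle matters so much.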

What the $20 billion actually is

The $20 billion tranche is not an investment commitment. It is a commercial-milestone-gated option on further equity issuance, with the milestones undisclosed in today’s announcement. Broadcom’s April 8-K used parallel language from the other direction: “The consumption of such expanded AI compute capacity by Anthropic is dependent on Anthropic’s continued commercial success.”[7]

Both sides of every deal in the triangle are now demand-contingent. The equity flows if Anthropic keeps compounding. The compute flows if Anthropic keeps compounding. The hyperscaler revenue flows if Anthropic keeps compounding. None of it flows if the $9 billion to $30 billion trajectory from the end of 2025 to April 2026 is a pull-forward rather than a durable curve.

That is the unstated reason “for mission-critical workloads” appeared in the 2026 language. If growth continues, the qualifier is decorative: everything becomes mission-critical. If growth pauses, the qualifier preserves Anthropic’s operational flexibility to shift non-critical workloads across the three clouds based on price and capacity, which is exactly what a compute buyer with three first-class supplier relationships should do.

The qualifier hovers over a second distribution boundary that took shape two weeks earlier. Amazon Bedrock launched Claude Mythos Preview on April 7 as a “gated research preview” — available only in the US East (N. Virginia) region and only to allow-listed organizations.[10] Anthropic declined to make Mythos generally available, citing its autonomous hacking capabilities. Internal testing surfaced a 27-year-old OpenBSD vulnerability and chained Linux kernel privilege-escalation exploits, among other findings across major operating systems and browsers.[11]

Eight days after the Bedrock launch, Anthropic briefed the European Commission on the model, and the Commission invoked the EU general-purpose AI Code of Practice that Anthropic has signed: assessment obligations apply to services “that may or may not be offered in Europe.”[12] The “meaningful expansion of international inference in Asia and Europe” that today’s Amazon press release cites applies to the standard Claude product line. Mythos, the most mission-critical workload Anthropic ships, sits inside Bedrock but outside that expansion. “Primary” is now narrowing on three axes: the linguistic qualifier in the contract, the product-geography tiering that restricts the frontier cybersecurity model to a single US region within Bedrock, and the regulatory boundary that keeps that tier out of the EU.

Jensen’s concession, priced

Yesterday’s piece argued that custom AI silicon is additive to Nvidia rather than substitutive because the only external customer who matters is Anthropic, and Anthropic exists because of a difficult-to-replicate capital-structure arrangement. Today’s announcement confirms the diagnosis from both sides. Trainium’s external pull-through is so concentrated in Anthropic that Amazon paid up to $25 billion in incremental equity to hold contract language already in place at $8 billion cumulative, plus a narrowing qualifier. The lab, which was a structural anomaly in November 2024, has extracted up to $40 billion in potential equity from Amazon, Microsoft, and Nvidia in five months, even as its compute commitments exceed $130 billion. The capital-structure arrangement that built the one customer is now the default funding model for the labs behind the leaders.

Yesterday’s piece closed on who the next Anthropic would be. Today prices the first one: three rounds of “primary,” a narrowing to “mission-critical,” a second tier carved out at the product-geography boundary, and $33 billion of potential equity held by a single hyperscaler that cannot afford to let the relationship downgrade.

The market had all day Monday to read the Amazon press release as a capital raise. The number it should read instead is $25 billion — the price of a word, in a document that simultaneously narrows the word’s scope.

Notes

[1] “Jensen Huang – TPU competition, why we should sell chips to China, & Nvidia’s supply chain moat,” Dwarkesh Podcast, April 15, 2026. Huang stated that Anthropic “is a unique instance, not a trend” and that Trainium and TPU external growth is “one hundred percent Anthropic.” See “Anthropic Is Not a Trend,” The AI Realist, April 20, 2026, for the Additive-vs-Substitutive Test framework and the capital-structure argument referenced throughout this piece.

[2] “Amazon and Anthropic expand strategic collaboration,” Amazon, April 21, 2026. Structure: $5 billion disbursed now, up to $20 billion more “tied to certain commercial milestones,” on top of the previously committed $8 billion. Commercial commitment: Anthropic to spend more than $100 billion on AWS technologies over ten years, including Trainium2, Trainium3, Trainium4, and future generations; up to 5 GW capacity. Claude Platform on AWS provides native Anthropic console access through AWS accounts without additional credentials or billing relationships. The Annapurna engineering collaboration is described in the same document as daily communication between the Anthropic and AWS engineering teams regarding the Trainium chip design.

[3] “Expanding access to safer AI with Amazon,” Anthropic, September 25, 2023. Original announcement of $4 billion with AWS as “primary cloud provider.” Language is precise and worth preserving in quotation: “primary cloud provider,” not “exclusive” and not “sole.” Google was already an Anthropic investor at this time; the “primary cloud provider” designation was formally applied to AWS alone.

[4] “Powering the next generation of AI development with AWS,” Anthropic, November 22, 2024. An additional $4 billion, bringing the cumulative total to $8 billion. AWS designation upgraded to “primary cloud and training partner.” The Trainium training commitment appears here for the first time.

[5] “Anthropic and Amazon expand collaboration for up to 5 gigawatts of new compute,” Anthropic, April 20, 2026 (dated one day prior to the Amazon press release). Full quote: “We continue to choose AWS as our primary training and cloud provider for mission-critical workloads.” The “mission-critical workloads” qualifier is new in the 2026 language and does not appear in the 2023 or 2024 announcements. The triple-hyperscaler framing (“Claude remains the only frontier AI model available to customers on all three of the world’s largest cloud platforms”) is also new. Revenue disclosure: “Our run-rate revenue has now surpassed $30 billion, up from approximately $9 billion at the end of 2025.” Consumer strain disclosure: “Our unprecedented consumer growth, in particular, has impacted reliability and performance for free, Pro, Max, and Team users, especially during peak hours.” The operational case for capacity expansion sits alongside the capital-structure analysis and does not displace it.

[6] “Microsoft, NVIDIA and Anthropic announce strategic partnerships,” Microsoft Official Blog, November 18, 2025. Anthropic commits to $30 billion of Azure compute capacity and up to 1 GW of capacity on Nvidia Grace Blackwell and Vera Rubin systems. Microsoft invests up to $5 billion; Nvidia invests up to $10 billion. Anthropic valuation at the time of this deal: approximately $350 billion, up from $183 billion in September 2025. See also CNBC coverage of the valuation trajectory.

[7] Broadcom Form 8-K, filed April 6, 2026. Direct quote: “Anthropic, beginning in 2027, will access through Broadcom approximately 3.5 gigawatts as part of the multiple gigawatts of next-generation TPU-based AI compute capacity committed by Anthropic. The consumption of such expanded AI compute capacity by Anthropic is dependent on Anthropic’s continued commercial success.” This is the disclosure that formalizes demand-contingency on the compute side, mirroring the milestone-gating on Amazon’s $20 billion tranche.

[8] “Broadcom agrees to expanded chip deals with Google, Anthropic,” CNBC, April 6, 2026. Mizuho analyst Vijay Rakesh estimates: $21 billion in Broadcom AI revenue from Anthropic in 2026, $42 billion in 2027. Broadcom’s role is that of a TPU manufacturing partner to Google; the Anthropic relationship routes through Google Cloud with Broadcom as the silicon supplier.

[9] Amazon’s 2026 capital expenditure guidance of approximately $200 billion was disclosed on the Q4 2025 earnings call in February 2026, as referenced by CNBC in “Amazon to invest up to another $25 billion in Anthropic,” April 20, 2026. The $5 billion disbursed today is roughly 2.5 percent of guided 2026 capex; the $25 billion incremental equity ceiling, spread over the unknown milestone period, would represent a materially smaller annualized percentage than the 12.5 percent implied by setting the full ceiling against a single year’s capex. The comparison is given to scale the current-year cash impact, not to conflate multi-year equity ceilings with annualized capex.

[10] “Amazon Bedrock now offers Claude Mythos Preview (Gated Research Preview),” AWS What’s New, April 7, 2026. AWS description: Mythos Preview is available only in the US East (N. Virginia) region through Amazon Bedrock, with access “limited to an initial allow-list of organizations.” AWS frames the release posture as a “deliberately cautious approach to release, prioritizing internet-critical companies and open-source maintainers.”

[11] “Claude Mythos Preview and Project Glasswing,” Anthropic, April 7, 2026. Anthropic announced Mythos on the same day as the Bedrock launch, citing a material capability jump over Claude Opus 4.6 and declining to make the model generally available. The core Project Glasswing partners are eleven organizations (Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, Microsoft, Nvidia, and Palo Alto Networks), with approximately forty organizations total in the broader testing cohort. Anthropic is providing up to $100 million in usage credits to Glasswing partners and $4 million to open-source security organizations. Technical details of the autonomous vulnerability discovery, including the twenty-seven-year-old OpenBSD bug, chained Linux kernel privilege-escalation exploits, and a browser sandbox escape, are documented in the “Mythos Preview Technical Report,” Anthropic Frontier Red Team, April 2026.

[12] “Anthropic talks to EU, including on its cyber security models, Commission says,” Reuters, April 17, 2026. European Commission spokesman Thomas Regnier confirmed the April 15 briefing and invoked the EU general-purpose AI Code of Practice, to which Anthropic is a signatory: “In this framework, there is an obligation to assess and mitigate risks that could come from a service that may or may not be offered in Europe.” See also “Anthropic Briefs EU Regulators on Mythos Cybersecurity Concerns,” PYMNTS, April 17, 2026, citing Agence France-Presse coverage of the same briefing.